Left: The AI That Closes Your Next Sale. Right: The AI That Keeps You in Business for the Next Decade

Executive Summary

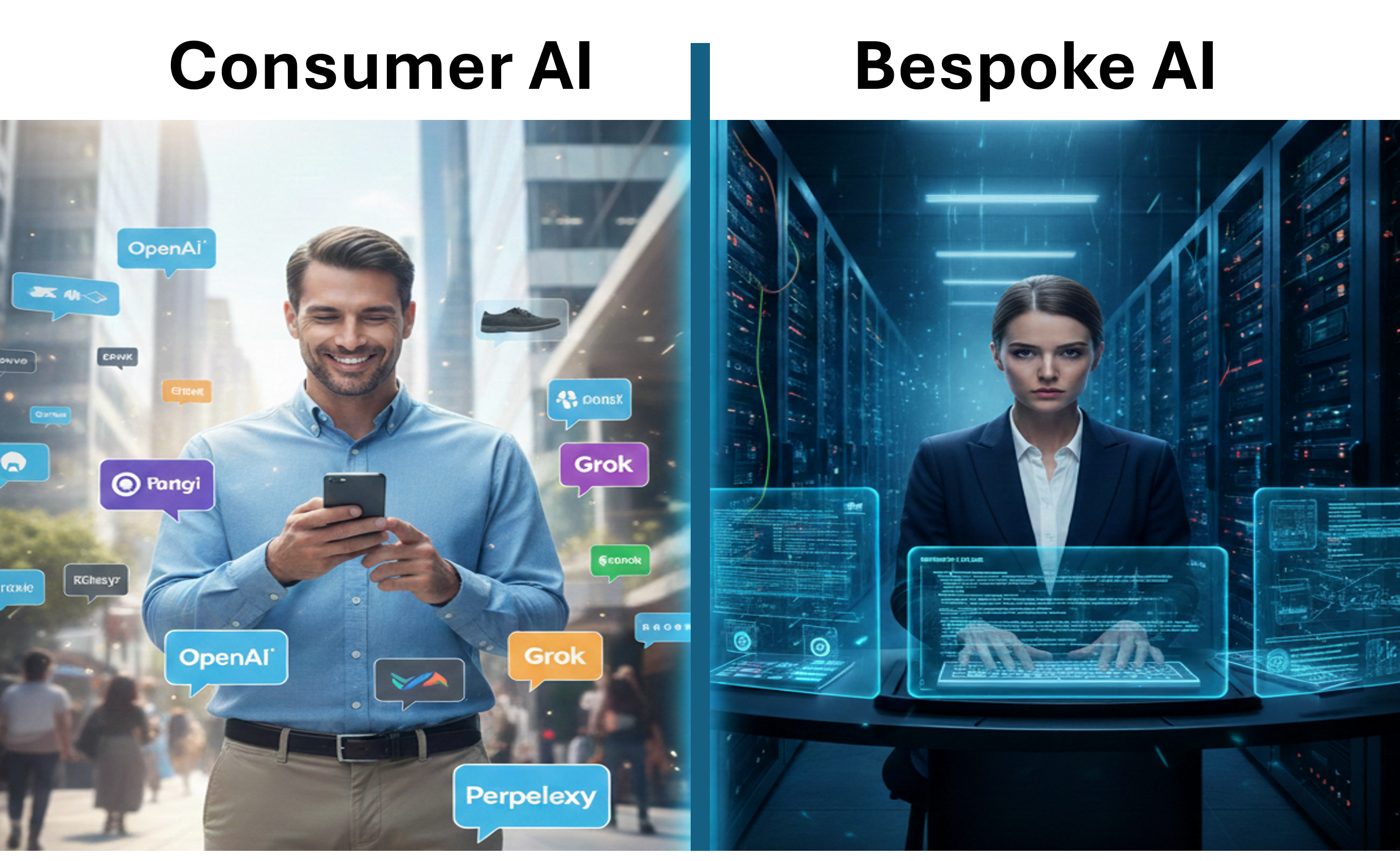

Two distinct AI layers exist in 2026: customer-facing / end-user AI (what your customers see and interact with before buying) and bespoke / internal enterprise AI (custom systems running behind the scenes to optimize your own operations)

Leaders must optimize both layers simultaneously — the customer-facing layer acts as the next generation of SEO and influences buying decisions in real time, while the bespoke/internal layer drives massive operational efficiency, cost reduction, and competitive moats

The real money concentrates in bespoke/internal AI — trillions in projected economic value, with enterprise generative AI spending already in the tens of billions annually, focused on custom integrations, fine-tuning, agentic workflows, and private AI factories

NVIDIA does not primarily compete against its customers — it dominates the infrastructure and enablement layer for bespoke AI (compute, full-stack platforms, AI factories), empowering hyperscalers and enterprises to build their own custom solutions rather than owning the final customer-facing applications

NVIDIA builds bespoke-enabling systems — DGX platforms, Enterprise AI Factories, NVL72 racks, NeMo customization tools, Omniverse, and industry blueprints accelerate enterprise-specific internal AI without NVIDIA taking over end-user app development

This positions NVIDIA, AWS, and Grok/xAI as key infrastructure choices for the high-ROI bespoke domain where enterprises create defensible advantages

Details

I've been receiving a steady stream of DMs with the same question: How can NVIDIA be such a dominant force in Enterprise AI when its biggest customers—Amazon, Google, Meta, Microsoft—are aggressively building their own custom chips and infrastructure? Isn't NVIDIA effectively competing against the very companies that buy billions of dollars of its hardware every year?

The question is excellent and gets to the heart of the current market structure. The answer lies in understanding that there are two fundamentally different categories of AI that every business leader must distinguish—and actively optimize—in 2026.

1. Customer-Facing / End-User AI — The Next Generation of SEO & Pre-Purchase Influence

This is the visible AI layer your prospects and customers encounter, often in the final moments before a buying decision.

Chat interfaces on brand websites

Personalized product recommendations in e-commerce

Voice assistants in mobile apps

Generative tools embedded in shopping or research experiences

This layer has become the modern equivalent of SEO — except it's far more dynamic and conversational. How your brand, products, pricing, reviews, and value proposition appear and perform inside ChatGPT, Gemini, Grok, Perplexity, Claude, and other frontier interfaces directly influences discovery, consideration, and conversion. Leaders who treat this as "set it and forget it" are already losing ground. Optimizing presence here requires constant monitoring, prompt engineering, structured data feeds, brand voice consistency, and sometimes dedicated agentic wrappers or API integrations to ensure your offering surfaces favorably in real-time AI-driven buying journeys.
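One concrete form a "structured data feed" can take is schema.org Product markup published as JSON-LD. The sketch below is a minimal illustration, not a prescribed method: the function name `product_jsonld`, its parameters, and the sample product are all hypothetical, and whether any given AI interface actually reads this markup is an assumption rather than something this post establishes.

```python
import json


def product_jsonld(name, description, price, currency,
                   rating=None, review_count=None):
    """Build a schema.org Product record and return it as a JSON-LD string.

    All argument names are illustrative; map them to your own catalog fields.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": {
            "@type": "Offer",
            # Prices are emitted as fixed two-decimal strings for consistency.
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
    }
    # Only include review data when both values are actually available.
    if rating is not None and review_count is not None:
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        }
    return json.dumps(data, indent=2)


# Hypothetical sample product, for illustration only.
snippet = product_jsonld(
    "Acme Widget", "A sample widget.", 19.5, "USD",
    rating=4.6, review_count=128,
)
print(snippet)
```

Generating the markup from one function keeps names, prices, and review figures consistent wherever they are published, which supports the brand-consistency point above.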

2. Bespoke / Internal Enterprise AI — The Long-Term Operational Moat

This is the invisible, high-value AI that companies build and run internally to transform operations, costs, speed, accuracy, and decision-making.

Real-time custom agents orchestrating supply chains

Fine-tuned fraud/risk models processing proprietary transaction data

Domain-specific reasoning engines accelerating R&D in pharmaceuticals or materials science

Agentic workflows automating multi-step internal processes across departments

This area is very exciting to me as a business execution enthusiast. The opportunity to measure culture in real time, to understand the causes of variability (the mortal enemy of Core Execution), and to gauge the effectiveness of Dynamic Execution CPs will be incredibly valuable.

Enterprises themselves ultimately own and capture the value here—but they rely on foundational infrastructure to train, fine-tune, deploy, and scale these bespoke systems securely and at production levels. This is the category where the overwhelming majority of serious enterprise spending is concentrating—tens of billions annually today, with trillions in cumulative economic impact forecasted over the coming decade.

Successful leaders in 2026 are optimizing both layers in parallel:

Short/medium-term tactical wins come from mastering customer-facing AI to meet sales goals and protect/grow market share (next-gen SEO)

Long-term strategic advantage comes from making the right platform bet for bespoke/internal AI — choosing infrastructure that enables rapid, secure, scalable customization without creating massive future switching debt

NVIDIA's Position: Infrastructure & Enablement Powerhouse — Not End-User Competitor

NVIDIA's business is overwhelmingly focused on powering the bespoke/internal enterprise AI layer — not owning or competing in the customer-facing applications its hyperscaler customers dominate.

Key elements of NVIDIA's strategy in this space:

Full-Stack AI Factories & Supercomputing Platforms — NVIDIA Enterprise AI Factory validated designs, DGX SuperPODs, NVL72 rack-scale systems, and DGX Spark provide pre-integrated, purpose-built infrastructure for enterprises to create their own secure, high-performance AI factories — optimized for agentic, physical, and HPC workloads.

Customization & Deployment Tools — NVIDIA AI Enterprise software suite (NeMo for model customization, NIM microservices for optimized inference, Blueprints for reference agentic and RAG workflows) gives enterprises and partners the building blocks to rapidly develop bespoke internal solutions.

Industry-Tailored Enablement — Platforms such as Omniverse (industrial/digital twin simulations), Clara (healthcare), and integrations with partners like Siemens or Palo Alto Networks accelerate domain-specific bespoke AI.

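NVIDIA sells the accelerated compute, networking, software ecosystem, and increasingly the pre-validated "factory" designs that let enterprises, and the clouds that serve them, build bespoke internal AI at scale. Because NVIDIA supplies the layer its customers build on rather than the applications they sell, the relationship is complementary, which is why NVIDIA remains indispensable even as hyperscalers develop their own custom chips.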

Connecting to the Broader Series Themes

This infrastructure dynamic aligns with patterns we've tracked throughout the series:

Execution velocity under constraint wins long-term

Deep moats in foundational layers endure

Platform choices are multi-year, high-stakes bets with enormous switching costs

Bespoke/internal enterprise AI is where the highest-ROI, most defensible value is being created right now. That is precisely why I continue to view NVIDIA as the foundational compute and bespoke-enablement leader, Grok/xAI as the high-execution, frontier-model wildcard ideally suited for custom deployments, and AWS as a proven, flexible cloud choice for scaling bespoke workloads (with growing Grok integration via Marketplace).

The flood of DMs reflects a broader realization: today's AI decisions are no longer about selecting a shiny chatbot — they are about dual optimization: mastering customer-facing AI for immediate sales impact and making the right long-term infrastructure bet for internal bespoke transformation.

If this distinction is top-of-mind for your organization — or if you're actively evaluating dual-layer roadmaps — feel free to comment or DM. I'm always glad to exchange perspectives on how these layers are playing out in practice.

Please share this post if you found the distinction between the two AI layers helpful — it helps more leaders see the full picture.

This is not investment advice. All views are personal and for discussion purposes only.